Multi-Modal AI Model Infrastructure: Processing Text, Ima…

Quick Summary

- GPT-4V: Vision + language; serving is estimated to need 8+ H100 GPUs

- LLaVA-NeXT: Open-source multi-modal, 4-8 GPUs for inference

- Video Understanding: 10-50x more compute than text-only models

- Storage: Multi-modal training data 100x larger than text datasets

- Architecture: Separate encoders per modality + fusion transformer

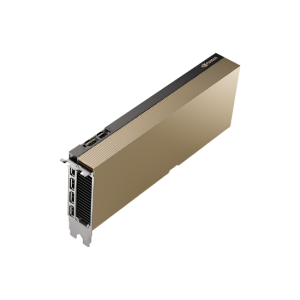

Infrastructure for multi-modal AI models: multi-GPU servers

Multi-modal AI models, which process combinations of text, images, video, audio, and other data types, represent the next frontier of artificial intelligence. Models like GPT-4V, LLaVA-NeXT, ImageBind, and Gato require infrastructure that can handle heterogeneous data types, multiple encoder architectures, and fusion mechanisms that combine information across modalities.

Architecture Overview

Multi-modal models typically use a separate encoder network for each modality (a vision transformer for images, a text transformer for language, and so on) plus a fusion layer that learns cross-modal relationships. Each modality encoder therefore has its own computational requirements, and the fusion mechanism adds more on top. During inference, the input modality determines which encoders run: audio inputs go through the audio encoder, text inputs through the language encoder, and a request that mixes modalities activates each corresponding encoder before fusion.
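The routing described above can be sketched in a few lines of Python. This is a toy illustration, not any particular model's implementation: the encoder functions, the `ModalityInput` type, and the concatenation-based `fuse` step are all hypothetical stand-ins (a real system would use neural encoders and a fusion transformer).

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple

Embedding = List[float]


@dataclass
class ModalityInput:
    modality: str    # e.g. "text", "image", "audio"
    payload: object  # raw data for that modality


# Toy encoders (assumptions): each returns a tiny feature vector.
def text_encoder(payload: object) -> Embedding:
    text = str(payload)
    return [float(len(text)), float(len(text.split()))]


def image_encoder(payload: object) -> Embedding:
    rows = list(payload)  # payload is a list of pixel rows
    return [float(len(rows)), float(len(rows[0]) if rows else 0)]


def audio_encoder(payload: object) -> Embedding:
    samples = list(payload)
    return [float(len(samples)), float(max(samples, default=0.0))]


# Registry: the input modality selects which encoder runs.
ENCODERS: Dict[str, Callable[[object], Embedding]] = {
    "text": text_encoder,
    "image": image_encoder,
    "audio": audio_encoder,
}


def encode(item: ModalityInput) -> Embedding:
    """Route one input to the encoder registered for its modality."""
    if item.modality not in ENCODERS:
        raise ValueError(f"no encoder registered for modality {item.modality!r}")
    return ENCODERS[item.modality](item.payload)


def fuse(embeddings: List[Embedding]) -> Embedding:
    """Placeholder fusion: plain concatenation instead of a fusion transformer."""
    fused: Embedding = []
    for embedding in embeddings:
        fused.extend(embedding)
    return fused


def run(inputs: List[ModalityInput]) -> Tuple[List[str], Embedding]:
    """Encode each input with its own encoder, then fuse the results."""
    used = [item.modality for item in inputs]
    return used, fuse([encode(item) for item in inputs])
```

Only the encoders named in a request are invoked, which is why per-request compute depends on the mix of modalities, even though all encoders usually stay resident in GPU memory.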

Memory and Compute Demands

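Multi-modal models require significantly more GPU memory than single-modal equivalents. GPT-4V is estimated to need approximately 80-160 GB of GPU memory depending on image resolution and batch size; OpenAI has not published the model's size, so this figure is an estimate. LLaVA-NeXT 34B requires 70-140 GB, where the lower bound sits just above the roughly 68 GB that 34 billion parameters occupy at 16-bit precision. The key driver is the need to hold multiple large encoders in GPU memory simultaneously, plus the fusion transformer and the KV cache.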
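As a rough back-of-the-envelope check on figures like these, the sketch below adds up weight memory and KV cache for a 34B-parameter language model with a small vision encoder. The layer count, KV head count, head dimension, sequence length, batch size, and the 0.3B encoder size are illustrative assumptions, not published specifications of any model named here, and the estimate ignores activations and framework overhead.

```python
def weights_gb(params_billion: float, bytes_per_param: int = 2) -> float:
    """Weight memory in GB (1 GB = 1e9 bytes); 2 bytes per parameter = FP16/BF16."""
    return params_billion * bytes_per_param


def kv_cache_gb(layers: int, kv_heads: int, head_dim: int,
                seq_len: int, batch: int, bytes_per_value: int = 2) -> float:
    """KV cache in GB: one key and one value vector per layer, KV head, and token."""
    values = 2 * layers * kv_heads * head_dim * seq_len * batch
    return values * bytes_per_value / 1e9


def serving_memory_gb(language_params_b: float, encoder_params_b: float,
                      kv_gb: float) -> float:
    """Sum of language-model weights, modality-encoder weights, and KV cache."""
    return weights_gb(language_params_b) + weights_gb(encoder_params_b) + kv_gb


if __name__ == "__main__":
    # Assumed shape: 60 layers, 8 KV heads of dimension 128, 4096 tokens, batch 8.
    kv = kv_cache_gb(layers=60, kv_heads=8, head_dim=128, seq_len=4096, batch=8)
    total = serving_memory_gb(language_params_b=34, encoder_params_b=0.3, kv_gb=kv)
    print(f"KV cache: {kv:.1f} GB, total: {total:.1f} GB")
```

Under these assumptions the total comes to roughly 77 GB, near the low end of the range quoted above. Higher image resolution adds visual tokens to the sequence, so `seq_len` and `batch` are the terms that push the estimate toward the upper end.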

Government Intelligence Applications

Multi-modal AI is transforming intelligence analysis by enabling joint analysis of imagery, communications intercepts, documents, and signals data. A single model can analyze satellite imagery alongside intercepted communications and written intelligence reports, identifying cross-modal correlations that separate analysts might miss.

What GPU configuration is recommended for multi-modal models?

A minimum of 4x H100 GPUs with NVLink is recommended for production multi-modal serving. Training requires 32-512 GPUs depending on model size and data diversity. The L40S provides a cost-effective alternative for inference-only deployments.
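For a first-pass sizing, the GPU count needed just to fit a model in memory can be estimated as below. This is a minimal sketch: the `usable_fraction` headroom and the `gpus_needed` helper are assumptions for illustration, and the result is a memory floor only. Throughput, latency targets, and redundancy typically push production deployments above it.

```python
import math

# Per-GPU memory in GB: H100 (80 GB variant) and L40S (48 GB).
GPU_MEMORY_GB = {"H100": 80, "L40S": 48}


def gpus_needed(required_gb: float, gpu: str, usable_fraction: float = 0.9) -> int:
    """Smallest GPU count whose usable memory covers required_gb.

    usable_fraction is an assumed headroom factor reserving part of each
    GPU for runtime overhead and fragmentation.
    """
    usable_per_gpu = GPU_MEMORY_GB[gpu] * usable_fraction
    return math.ceil(required_gb / usable_per_gpu)


if __name__ == "__main__":
    for gpu in GPU_MEMORY_GB:
        print(gpu, gpus_needed(140, gpu))
```

Memory capacity alone would be satisfied by fewer GPUs than the recommended minimum of four; the extra devices buy batch capacity and serving throughput.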

Can multi-modal models run on air-gapped networks?

Yes. NTS provides multi-modal AI infrastructure for air-gapped classified environments with all model components (encoders, fusion layers, tokenizers) deployed within the secure enclave.