Retrieval-Augmented Generation Infrastructure: Complete D…

Quick Summary

- Components: Embedding model, vector database, LLM, orchestration

- Vector DB: Pinecone, Weaviate, Milvus, Qdrant for similarity search

- GPU Requirement: typically 1-4 GPUs for embedding and generation

- Latency: RAG adds 100-500ms to end-to-end query time

- Government: RAG enables secure AI on classified documents

RAG Infrastructure: Complete Deployment Guide

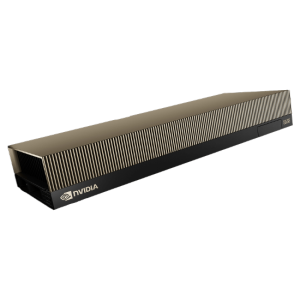

Retrieval-Augmented Generation (RAG) combines the knowledge retrieval capabilities of vector databases with the generative power of large language models, enabling AI systems to access and reason over private or domain-specific knowledge without fine-tuning. RAG infrastructure requires a carefully orchestrated architecture spanning document processing, embedding generation, vector storage, retrieval routing, and LLM inference.

RAG Architecture Components

A production RAG system consists of four primary components. The ingestion pipeline chunks documents, embeds each chunk, and indexes the resulting vectors in a vector database. The retrieval system runs a similarity search against that vector index to find context relevant to the query. The LLM inference engine generates a response from the retrieved context plus the user's query. The orchestration layer ties these stages together, managing conversation state, query routing, and response formatting.
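The flow of these components can be sketched end to end in plain Python. This is a minimal, hypothetical illustration rather than a production design: `chunk_text`, `embed`, `InMemoryIndex`, and `build_prompt` are names invented here; `embed` is a toy hashed bag-of-words function standing in for a real embedding model; and the index performs exact brute-force cosine search, where a vector database such as Milvus or Qdrant would typically use an approximate nearest-neighbor index. The final prompt would be handed to the LLM inference engine, which is not shown.

```python
import hashlib
import math
from dataclasses import dataclass, field


def chunk_text(text: str, chunk_size: int = 40, overlap: int = 10) -> list[str]:
    """Split text into overlapping windows of words (a simple chunking strategy)."""
    words = text.split()
    if not words:
        return []
    step = chunk_size - overlap  # assumes overlap < chunk_size
    return [" ".join(words[i:i + chunk_size])
            for i in range(0, max(len(words) - overlap, 1), step)]


def embed(text: str, dim: int = 512) -> list[float]:
    """Toy hashed bag-of-words embedding, a stand-in for a real embedding model."""
    vec = [0.0] * dim
    for raw in text.lower().split():
        token = raw.strip(".,?!;:")
        if not token:
            continue
        idx = int(hashlib.sha256(token.encode()).hexdigest(), 16) % dim
        vec[idx] += 1.0
    norm = math.sqrt(sum(v * v for v in vec)) or 1.0
    return [v / norm for v in vec]  # unit length, so dot product = cosine


@dataclass
class InMemoryIndex:
    """Exact cosine-similarity search; vector databases typically use ANN indexes."""
    chunks: list[str] = field(default_factory=list)
    vectors: list[list[float]] = field(default_factory=list)

    def add(self, chunk: str) -> None:
        self.chunks.append(chunk)
        self.vectors.append(embed(chunk))

    def search(self, query: str, k: int = 3) -> list[str]:
        q = embed(query)
        scores = [sum(a * b for a, b in zip(q, v)) for v in self.vectors]
        ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
        return [self.chunks[i] for i in ranked[:k]]


def build_prompt(query: str, context: list[str]) -> str:
    """Combine retrieved chunks and the user query into a prompt for the LLM."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"


# Ingestion: chunk, embed, and index each document.
docs = [
    "Milvus is an open-source vector database that runs on Kubernetes.",
    "Llama 3 70B is served across multiple H100 GPUs.",
    "Embedding models convert document chunks into vectors.",
]
index = InMemoryIndex()
for doc in docs:
    for chunk in chunk_text(doc):
        index.add(chunk)

# Query time: retrieve context, then build the prompt for the inference engine.
question = "Which vector database runs on Kubernetes?"
prompt = build_prompt(question, index.search(question, k=1))
print(prompt)
```

Swapping `embed` for a real embedding model and `InMemoryIndex` for a vector database client changes the components but not the shape of the pipeline: chunk, embed, index, retrieve, then prompt.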

GPU Requirements for RAG

RAG systems have distinct GPU requirements for the embedding and generation stages. Self-hosted embedding models (e.g., E5-mistral, BGE) need modest GPU resources: a single L4 or L40S handles embedding of thousands of documents per minute. Hosted embedding APIs such as OpenAI Ada run on the provider's infrastructure and need no local GPU. The LLM serving GPU depends on model size: at 16-bit precision, Llama 3 8B fits on a single L4, while Llama 3 70B requires 2-8 H100 GPUs depending on throughput requirements.
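The model-size rule of thumb comes down to simple arithmetic: at 16-bit precision (FP16/BF16) each parameter occupies 2 bytes, so the weights alone need roughly the parameter count times 2 bytes. The sketch below assumes nominal parameter counts (8e9 and 70e9), decimal gigabytes, and a hypothetical `headroom` fraction of each GPU reserved for KV cache and runtime overhead. It counts weight memory only and ignores parallelism constraints, so its result is a lower bound, not a sizing recommendation.

```python
import math


def weight_memory_gb(num_params: float, bytes_per_param: float = 2.0) -> float:
    """Approximate memory for model weights alone, in decimal GB (1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def min_gpus_for_weights(num_params: float, gpu_memory_gb: float,
                         bytes_per_param: float = 2.0,
                         headroom: float = 0.0) -> int:
    """Smallest GPU count whose combined usable memory holds the weights.

    headroom is an assumed fraction of each GPU kept free for KV cache,
    activations, and runtime overhead; real values depend on the serving stack.
    """
    usable_per_gpu = gpu_memory_gb * (1.0 - headroom)
    return math.ceil(weight_memory_gb(num_params, bytes_per_param) / usable_per_gpu)


# 16-bit weights (2 bytes per parameter), nominal parameter counts.
print(weight_memory_gb(8e9))                           # 16.0 GB
print(min_gpus_for_weights(8e9, 24))                   # 1 (L4, 24 GB)
print(min_gpus_for_weights(70e9, 80))                  # 2 (H100, 80 GB)
print(min_gpus_for_weights(70e9, 80, headroom=0.25))   # 3
```

The 70B result of 2 GPUs corresponds to the low end of the 2-8 range; the higher counts likely reflect reserving more memory for KV cache under concurrent load and adding replicas for throughput.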

Government RAG Deployment

RAG is particularly valuable for government applications requiring AI access to classified or controlled documents. By keeping both the vector database and LLM on-premise, RAG systems provide AI capabilities on sensitive data without any information leaving organizational control. NTS provides integrated RAG infrastructure with encrypted vector databases and secure LLM serving in air-gapped configurations.

Frequently Asked Questions

What vector database is best for enterprise RAG?

Milvus, Pinecone, Weaviate, and Qdrant are the leading vector databases. Pinecone is primarily a managed cloud service, so for on-premise government deployments, self-hosted options such as Milvus (open-source, Kubernetes-native) or Weaviate (with built-in encryption) are recommended.

Does RAG require GPU acceleration?

RAG benefits from GPU acceleration at two points: embedding generation, where GPUs can reduce latency by 10-50x compared with CPUs, and LLM inference, where GPUs are essential for acceptable response times. The vector database itself typically runs on CPU, although some platforms, such as Milvus, offer GPU-accelerated indexing and search.