NVIDIA H200 NVL Deep Dive: Memory and Bandwidth for Large…

Quick Summary

- Memory: 141GB HBM3e per GPU, 76% more than H100 80GB

- Bandwidth: 4.8 TB/s HBM3e vs H100 3.35 TB/s

- Best For: Large language models requiring >80GB per GPU

- Llama 3 70B: Fits entirely on a single H200 NVL GPU

- Availability: Shipping via NTS GSA Schedule and SEWP V contracts

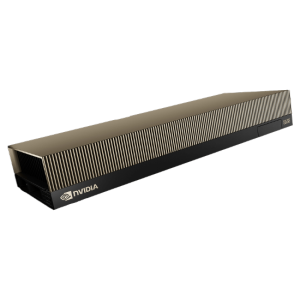

NVIDIA H200 NVL: NVIDIA's High-Capacity AI Inference GPU

The NVIDIA H200 NVL represents a strategic mid-cycle enhancement to the Hopper architecture, addressing the most pressing constraint in large language model deployment: GPU memory capacity. While the H200 shares the same GH100 chip architecture as the H100, its adoption of HBM3e memory technology enables a 76% increase in memory capacity and 43% increase in memory bandwidth, fundamentally changing what is possible on a single GPU.

For enterprise AI teams and federal agencies deploying increasingly large language models, the H200 NVL's 141GB of HBM3e memory is a game-changer. Models that previously required two GPUs, with all the associated complexity of model parallelism, can now fit on a single GPU, simplifying deployment, reducing latency, and improving reliability.

| Specification | H100 SXM | H200 NVL | Improvement |
|---|---|---|---|
| Memory Type | HBM3 | HBM3e | Enhanced HBM3 |
| Memory Capacity | 80 GB | 141 GB | +76% |
| Memory Bandwidth | 3.35 TB/s | 4.8 TB/s | +43% |
| NVLink Bandwidth | 900 GB/s | 900 GB/s | Same |
| AI TFLOPS (FP8) | 3,958 | 3,958 | Same |
| TDP | 700 W | 700 W | Same |
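
The Improvement column follows directly from the two specification columns. As a quick check, here is a minimal sketch that recomputes the percentages from the table values (the helper name `pct_gain` is illustrative, not part of any library):

```python
def pct_gain(old: float, new: float) -> float:
    """Percentage increase going from `old` to `new`."""
    return (new - old) / old * 100.0


# Values copied from the specification table above.
capacity_gain = pct_gain(80, 141)       # memory capacity in GB
bandwidth_gain = pct_gain(3.35, 4.8)    # memory bandwidth in TB/s

print(f"Memory capacity:  +{capacity_gain:.0f}%")
print(f"Memory bandwidth: +{bandwidth_gain:.0f}%")
```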

Memory Impact on LLM Serving

The H200 NVL's memory advantage directly enables more efficient LLM serving. Llama 3 70B in FP16 requires approximately 140GB for its weights (70 billion parameters at 2 bytes each), which fits on a single H200 NVL, though with little room left for the KV cache, versus requiring two H100 GPUs with NVLink. This single-GPU deployment eliminates inter-GPU communication overhead, which can reduce inference latency by 30-50% for latency-sensitive applications.
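
The 140GB figure comes from counting two bytes per parameter. The sketch below reproduces that arithmetic and estimates how much of the 141GB would remain for the KV cache. The layer count, KV-head count, and head dimension are assumptions based on the publicly released Llama 3 70B configuration, not figures from this article, and the estimate ignores activations and framework overhead.

```python
GB = 1e9  # decimal gigabytes, as used on GPU spec sheets


def weights_bytes(n_params: float, bytes_per_param: int) -> float:
    """Memory needed to hold the model weights alone."""
    return n_params * bytes_per_param


def kv_bytes_per_token(layers: int, kv_heads: int, head_dim: int,
                       bytes_per_value: int) -> int:
    """KV-cache bytes added per token: one key and one value vector
    per layer and per KV head."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value


# Nominal 70 billion parameters stored in FP16 (2 bytes each).
weights = weights_bytes(70e9, 2)

# Assumed Llama 3 70B shape: 80 layers, 8 KV heads, head dimension 128.
per_token = kv_bytes_per_token(layers=80, kv_heads=8, head_dim=128,
                               bytes_per_value=2)

headroom = 141 * GB - weights
max_cached_tokens = int(headroom // per_token)

print(f"Weights:            {weights / GB:.0f} GB")
print(f"KV cache per token: {per_token / 1024:.0f} KiB")
print(f"Headroom:           {headroom / GB:.0f} GB")
print(f"Tokens that fit in the headroom: {max_cached_tokens}")
```

Under these assumptions the headroom covers only a few thousand cached tokens across all concurrent requests, so a lower-precision format may be needed for long contexts or large batches.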

For larger models, the H200 NVL reduces the number of GPUs required. At FP16, the weights of Llama 3 405B occupy about 810GB (405 billion parameters at 2 bytes each), which takes at least 6 H200 NVL GPUs versus 11 H100 80GB GPUs, a reduction of roughly 45% in GPU count with corresponding savings in server cost, power consumption, and facility requirements.
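
A weights-only lower bound on GPU count can be sketched as follows. It assumes decimal gigabytes, ignores KV cache and activation memory, and treats the combined GPU memory as one pool, so real deployments may need more GPUs or may round up to a convenient parallelism degree. The function name `min_gpus` is illustrative.

```python
import math


def min_gpus(n_params: float, bytes_per_param: float,
             gpu_capacity_gb: float) -> int:
    """Fewest GPUs whose combined memory can hold the weights alone."""
    weights_gb = n_params * bytes_per_param / 1e9
    return math.ceil(weights_gb / gpu_capacity_gb)


# Per-GPU capacities from the specification table.
gpus = {"H100 80GB": 80, "H200 NVL 141GB": 141}
# Bytes per parameter for two common inference precisions.
formats = {"FP16": 2, "FP8": 1}

for model_name, n_params in [("Llama 3 70B", 70e9), ("Llama 3 405B", 405e9)]:
    for fmt, nbytes in formats.items():
        for gpu_name, capacity in gpus.items():
            count = min_gpus(n_params, nbytes, capacity)
            print(f"{model_name} {fmt} on {gpu_name}: at least {count} GPU(s)")
```

Counts from this estimate can differ from vendor sizing guidance, which may assume a different precision or parallelism layout.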

Training Performance Considerations

While the H200 NVL maintains the same compute performance as H100 (3,958 TFLOPS FP8), its larger memory enables larger batch sizes during training, improving GPU utilization and training throughput. In practical terms, H200 NVL delivers 15-25% higher training throughput for memory-bound workloads without any software changes.
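
To see why extra capacity translates into larger batches, consider a rough model in which a fixed amount of memory holds weights, gradients, and optimizer state, and each sample in the batch adds a constant amount of activation memory. The 60GB fixed cost and 2.5GB per-sample cost below are hypothetical values chosen only for illustration, and `max_micro_batch` is an illustrative helper, not a framework API.

```python
def max_micro_batch(capacity_gb: float, fixed_gb: float,
                    per_sample_gb: float) -> int:
    """Largest per-GPU batch that fits once fixed training state is resident."""
    free_gb = capacity_gb - fixed_gb
    if free_gb <= 0:
        return 0
    return int(free_gb // per_sample_gb)


# Hypothetical workload: 60 GB of fixed state, 2.5 GB of activations per sample.
FIXED_GB = 60.0
PER_SAMPLE_GB = 2.5

h100_batch = max_micro_batch(80, FIXED_GB, PER_SAMPLE_GB)
h200_batch = max_micro_batch(141, FIXED_GB, PER_SAMPLE_GB)

print(f"H100 80GB micro-batch:      {h100_batch}")
print(f"H200 NVL 141GB micro-batch: {h200_batch}")
```

Because the fixed state consumes the same amount on both GPUs, the memory left for activations grows faster than total capacity in this model. How much of that becomes throughput depends on the workload.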

For federal research institutions and government AI labs, the H200 NVL's larger memory simplifies scaling of transformer-based models by reducing the need for complex model parallelism strategies. This translates to faster experiment iteration and reduced engineering overhead.

Can existing H100 servers be upgraded to H200 NVL?

H200 NVL requires the same HGX baseboard form factor as H100. Servers designed for HGX H100 can typically accommodate H200 NVL with firmware updates. NTS provides upgrade assessment services for federal clients.

Is H200 NVL available through federal contract vehicles?

Yes, NTS offers H200 NVL-based configurations through GSA Schedule, SEWP V, and ITES-4H contracts with TAA-compliant manufacturing and FISMA-ready configurations.

What is the price premium for H200 over H100?

The H200 NVL typically carries a 20-30% price premium over equivalent H100 configurations. The TCO advantage depends on workload: for memory-bound models, the per-token cost can be 25-40% lower due to reduced GPU count.
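
The trade-off between price premium and GPU count can be sketched with simple arithmetic. This example assumes GPU spend scales linearly with GPU count and ignores server, power, and throughput differences, so it illustrates only the hardware side of TCO. The function name `relative_gpu_spend` is illustrative.

```python
def relative_gpu_spend(n_h200: int, n_h100: int, premium: float) -> float:
    """H200 GPU spend as a fraction of the equivalent H100 GPU spend.

    `premium` is the per-GPU price premium of H200 over H100 (0.25 = 25%).
    """
    return n_h200 * (1.0 + premium) / n_h100


# Llama 3 70B in FP16: one H200 NVL versus two H100 GPUs,
# evaluated at both ends of the 20-30% premium range quoted above.
for premium in (0.20, 0.30):
    ratio = relative_gpu_spend(n_h200=1, n_h100=2, premium=premium)
    print(f"Premium {premium:.0%}: H200 GPU spend is {ratio:.0%} of H100 spend")
```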